The first multimodal system dates back to the early 1980s, and considerable progress has been made since then. Multimodal systems process two or more modes of user input (such as voice commands, gestures or gaze) in a coordinated manner with multimedia system output. Research has focused mostly on multimodal input, whereas output, or multimodal feedback, has only recently been investigated. This project therefore offers a starting point for further research in this area and, more importantly, helps close the interaction cycle with technology: users do not simply interact with a computer; the computer also responds to them.

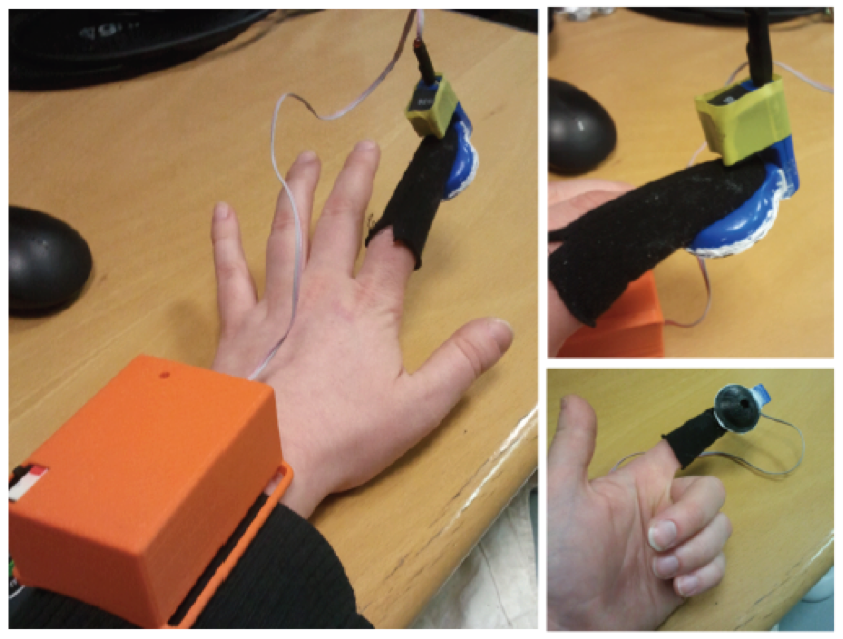

The feedback types, or output modalities, I relied on in this project were visual, audio and tactile. Tactile feedback was provided through a prototype glove worn on the index finger, which generated a pulse-wave signal on the fingertip. Haptic signals were generated by modifying only the pressure time and the intensity level. A total of three experiments were conducted.

Given the novelty of the glove, the first two studies were designed to evaluate how the stimuli it produced were perceived. The first evaluated how different intensity levels were perceived in a series of stimuli of equal duration, while the second investigated how distinguishable two stimuli of equal intensity but varying duration were. Based on the results of experiments 1 and 2, two tactile stimuli were created for use as tactile feedback in the third experiment.

The third experiment evaluated the user experience in response to the multimodal feedback. Here the user interacted with a virtual kitchen displayed on a TV at a distance of 2 m, while a Kinect camera tracked the user's body movements. Users had to open and close the right door of the refrigerator and the oven door. For each of these interactions (opening and closing the appliances), users received one of seven combinations of multimodal feedback (audio only, visual only, haptic only, audio-visual, audio-haptic, visual-haptic, audio-visual-haptic). Interactions were video-recorded, and at the end participants filled out questionnaires on their preferred order of the feedback conditions, also in relation to the hand that wore the glove for tactile feedback. The most preferred feedback was the trimodal one, while the least preferred was the visual one. We also used an adapted version of the Microsoft Desirability Toolkit questionnaire.
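
The seven feedback conditions are simply every non-empty combination of the three output modalities (2³ − 1 = 7). As a minimal illustrative sketch, not the code used in the study, they can be enumerated as follows; the names `MODALITIES` and `feedback_conditions` are hypothetical and introduced only for this example.

```python
from itertools import combinations

# The three output modalities described in the text.
MODALITIES = ("audio", "visual", "haptic")


def feedback_conditions(modalities=MODALITIES):
    """Return every non-empty combination of the given modalities.

    Hypothetical helper for illustration only; it is not taken from
    the original experiment software.
    """
    return [
        combo
        for size in range(1, len(modalities) + 1)
        for combo in combinations(modalities, size)
    ]


conditions = feedback_conditions()
for combo in conditions:
    # Join the modality names, e.g. ("audio", "visual") -> "audio-visual".
    print("-".join(combo))
```

Running the sketch lists three unimodal, three bimodal and one trimodal condition, matching the seven combinations named above.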

Altogether, the findings show that visual, audio-visual and trimodal feedback gave the shortest reaction times. These conclusions are in line with what we commonly find in everyday devices such as smartphones and game consoles, which increasingly provide tactile feedback in addition to audio and visual feedback. The addition of tactile feedback, and thus the trimodal combination, was most appreciated in this kind of touchless interaction, as it resembled real-world interactions with physical objects.

Publication:

Marta E. Cecchinato. User Experience Evaluation in Multimodal Interaction. MSc Dissertation. Faculty of General Psychology. Università degli Studi di Padova.

Primary Supervisor: Luciano Gamberini. Secondary Supervisor: Giulio Jacucci.